Failover Protection

Modern AI applications depend on upstream model providers that can experience outages, regional incidents, throttling, or network issues. In practice, these problems often show up not only as hard downtime but also as frequent rate limiting (HTTP 429) or capacity errors, which must be mitigated quickly to keep user-facing latency and error rates under control. Without a clear failover strategy, they surface as errors or timeouts in your product.

MultiRoute is designed to give you defense in depth, with an explicit focus on trust and honesty about failure modes:

- Client-side failover in the SDK, so calls can keep working even if api.multiroute.ai is temporarily unavailable.

- Platform-level routing and fallbacks, so traffic is automatically steered across providers and models when any one of them is degraded.

No one can guarantee 100% uptime for any API, including MultiRoute itself. The platform is built for very high availability, but the SDK’s client-side failover gives you a last line of defense when the control plane is having a bad day, so you are not betting your entire application on a single point of failure.

This page explains how these layers work together and how to take advantage of them safely.

Why failover matters

When you depend on a single provider or model:

- Any provider outage becomes an outage for your app.

- Regional incidents or networking issues can increase latency or cause timeouts.

- Rate limiting or capacity constraints can cause spikes in 429/5xx errors.

Failover protection reduces the blast radius of these events by:

- Allowing requests to be retried against alternate models or providers.

- Keeping application code simple: you do not have to hard-code provider-specific logic.

- Giving you visibility into when and why failovers were triggered.

MultiRoute’s goal is to maximize availability without forcing you to rewrite your app every time your model mix changes.

Real-world provider failures

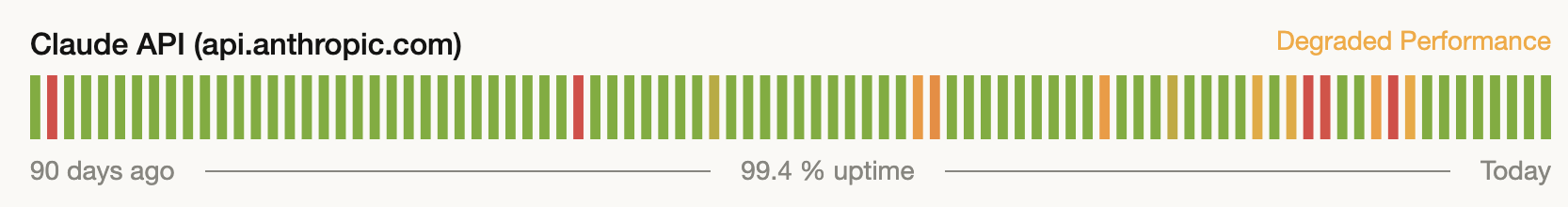

The need for failover is not theoretical. Even best-in-class providers show visible periods of degraded performance and elevated error rates over a typical 90-day window.

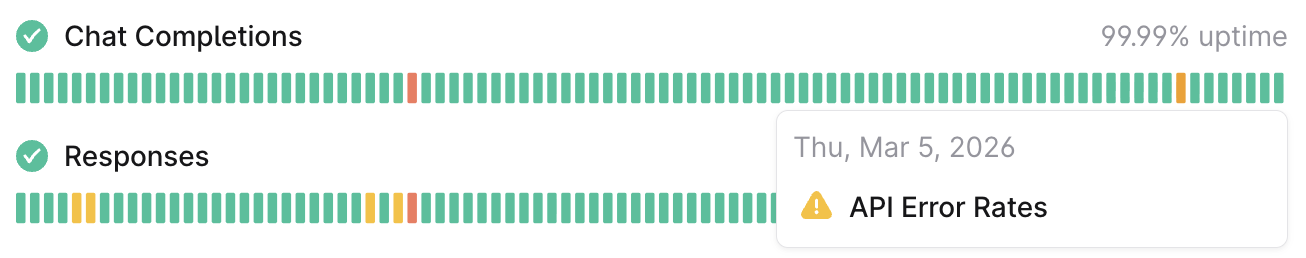

For example, OpenAI’s status history shows occasional spikes of API errors and partial brownouts:

Anthropic’s status history tells a similar story, with intermittent degraded performance events:

These charts highlight why it is risky to depend on a single upstream API. MultiRoute’s client-side and platform-level failover layers are designed to turn charts like these into operational noise that your users never see, by automatically routing around incidents when they happen.

Client-side failover with the Python SDK

The multiroute Python SDK gives you client-side failover as a final safety net on top of MultiRoute’s platform routing.

Even though MultiRoute is built for high availability, no one can promise 100% uptime for any API. When MultiRoute or a provider has a bad day, the SDK can automatically fall back to calling the underlying provider directly (using your provider API keys), so you keep serving users instead of surfacing our incident to them.

If you want to see exactly how this works, including code samples, see:

- multiroute Python SDK on GitHub

- The SDK’s failover behavior documentation in that repo
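The fallback pattern itself fits in a few lines of Python. This is a minimal sketch of the idea, not the multiroute SDK's actual API. `UpstreamError`, `RETRYABLE_STATUSES`, `is_retryable`, and `call_with_client_failover` are hypothetical names, and the set of retryable status codes is an assumption. The two callables stand in for a request through api.multiroute.ai and a direct request to the provider made with your own provider API key.

```python
"""Sketch of client-side failover: try MultiRoute first, then the provider.
All names are hypothetical illustrations, not the multiroute SDK's API."""

from typing import Callable, Optional

# Statuses treated as retryable in this sketch (an assumption; the real
# SDK's list may differ).
RETRYABLE_STATUSES = frozenset({429, 500, 502, 503, 504})


class UpstreamError(Exception):
    """Hypothetical error carrying an HTTP status (None = network failure)."""

    def __init__(self, status: Optional[int], message: str = "") -> None:
        super().__init__(message or f"upstream error (status={status})")
        self.status = status


def is_retryable(err: UpstreamError) -> bool:
    # No status means the endpoint was unreachable (DNS, connect, timeout).
    return err.status is None or err.status in RETRYABLE_STATUSES


def call_with_client_failover(
    request: dict,
    via_multiroute: Callable[[dict], dict],
    direct_to_provider: Callable[[dict], dict],
) -> dict:
    """Send `request` through MultiRoute; on a retryable failure, send it
    directly to the upstream provider instead."""
    try:
        return via_multiroute(request)
    except UpstreamError as err:
        if not is_retryable(err):
            raise  # e.g. 400/401: the request itself is bad; failover won't help
        return direct_to_provider(request)


if __name__ == "__main__":
    def multiroute_unreachable(req: dict) -> dict:
        raise UpstreamError(None, "connection refused")

    def provider_direct(req: dict) -> dict:
        return {"served_by": "provider", "model": req["model"]}

    # prints {'served_by': 'provider', 'model': 'example-model'}
    print(call_with_client_failover({"model": "example-model"},
                                    multiroute_unreachable, provider_direct))
```

Non-retryable errors, such as a malformed request, are re-raised instead of being sent to the provider. Repeating a bad request against a different endpoint would fail the same way.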

Platform-level routing and failover

Beyond the client, MultiRoute’s platform handles routing, timeouts, retries, and fallbacks across multiple providers and models.

The high-level flow for a typical request to /openai/v1/chat/completions is:

- Your app (or SDK) sends a request to https://api.multiroute.ai/openai/v1/chat/completions.

- MultiRoute identifies your project and routing configuration.

- A model and provider are selected according to your configured priorities and weights.

- Timeouts and retries are applied for the chosen provider.

- If the provider is unhealthy or repeatedly fails with retryable errors, MultiRoute may fail over to a fallback model or provider.

- The final response (or error) is returned to your application.
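The selection, retry, and failover steps above can be approximated in Python. This is an illustrative sketch under stated assumptions, not MultiRoute's implementation. `Target`, `RetryableError`, `order_targets`, `route`, the rule that a lower `priority` number is tried first, and the per-target retry count are all hypothetical. The sketch assumes the `send` callable enforces its own per-attempt timeout.

```python
"""Sketch of priority + weight routing with per-target retries and fallback.
Illustrative only; not MultiRoute's actual routing code."""

import random
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Target:
    provider: str
    model: str
    priority: int   # lower number = tried first (assumption)
    weight: float   # relative traffic share within a tier; must be > 0


class RetryableError(Exception):
    """Hypothetical stand-in for 429/5xx/timeout failures."""


def order_targets(targets: List[Target], rng: random.Random) -> List[Target]:
    """Group targets by priority. Within each tier, use weighted sampling
    without replacement, so the first pick follows the configured weights."""
    ordered: List[Target] = []
    for prio in sorted({t.priority for t in targets}):
        tier = [t for t in targets if t.priority == prio]
        while tier:
            pick = rng.choices(tier, weights=[t.weight for t in tier], k=1)[0]
            ordered.append(pick)
            tier.remove(pick)
    return ordered


def route(
    request: dict,
    targets: List[Target],
    send: Callable[[Target, dict], dict],  # assumed to apply its own timeout
    retries_per_target: int = 1,
    healthy: Optional[Dict[str, bool]] = None,
    rng: Optional[random.Random] = None,
) -> dict:
    rng = rng or random.Random()
    healthy = healthy or {}
    last_err: Optional[Exception] = None
    for target in order_targets(targets, rng):
        if not healthy.get(target.provider, True):
            continue  # skip providers already marked unhealthy
        for _ in range(1 + retries_per_target):
            try:
                return send(target, request)
            except RetryableError as err:
                last_err = err  # retry this target, then fail over to the next
    raise RuntimeError("all targets failed") from last_err


if __name__ == "__main__":
    targets = [
        Target("openai", "model-a", priority=0, weight=3.0),
        Target("anthropic", "model-b", priority=0, weight=1.0),
        Target("backup-provider", "model-c", priority=1, weight=1.0),
    ]

    def send(target: Target, req: dict) -> dict:
        # Simulate an outage at both priority-0 providers.
        if target.priority == 0:
            raise RetryableError(f"{target.provider}: 503")
        return {"served_by": target.provider, "model": target.model}

    # prints {'served_by': 'backup-provider', 'model': 'model-c'}
    print(route({"messages": []}, targets, send))
```

Weighted sampling without replacement lets each tier's weights decide which target is tried first. Every target in the tier is still tried before the router moves on to the next priority tier.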

For a deeper dive into how routing behaves, see:

Request lifecycle with both layers

When you use the multiroute SDK with a MultiRoute API key, your request effectively has two layers of protection:

- Client layer

  - App code → multiroute client.

  - If MultiRoute is unreachable or returns certain retryable errors, the SDK retries the call directly against the upstream provider.

- Platform layer

  - App code / SDK → api.multiroute.ai.

  - MultiRoute applies routing, timeouts, retries, and failover across configured providers and models.

This combination reduces the chances that a single network hop, provider, or region issue will become a user-visible failure.

Best practices for using failover

To get the most out of failover protection:

- Configure viable fallbacks for critical workloads.

  - Avoid having a single primary model with no backup for user-facing paths.

  - Use routing profiles to define primary vs. secondary models and providers.

- Design requests to be idempotent where possible.

  - Retries and failovers may result in the same logical request being sent more than once.

  - For operations with side effects, use idempotency keys or unique request identifiers in your own systems.

- Tune timeouts by use case.

  - For interactive UI calls, prefer shorter timeouts and more aggressive failover.

  - For offline or batch jobs, you can allow longer timeouts and more conservative failover behavior.

- Align client retries with platform behavior.

  - Avoid adding unbounded client-side retry loops on top of MultiRoute’s own retries and failover.

  - Prefer a small, well-defined number of retries with exponential backoff.
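Several of the practices above can be combined into one small client-side policy. This is a sketch, not part of the multiroute SDK. `RetryPolicy`, `PROFILES`, `TransientError`, `backoff_delay`, `call_with_retries`, and the numeric values in each profile are hypothetical. The policy caps the number of attempts and waits between them using exponential backoff with "full jitter", meaning a random delay between zero and an exponentially growing ceiling. It also applies a per-use-case timeout and reuses a single idempotency key for every attempt of the same logical request.

```python
"""Sketch of a bounded retry policy: exponential backoff with full jitter,
per-use-case timeouts, and one idempotency key per logical request.
All names are hypothetical; they are not part of the multiroute SDK."""

import random
import time
import uuid
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class RetryPolicy:
    max_attempts: int    # total attempts including the first (at least 1 is made)
    base_delay_s: float  # ceiling for the first backoff delay
    max_delay_s: float   # cap on any single delay
    timeout_s: float     # per-attempt timeout handed to send()


# Illustrative values: short and aggressive for interactive UI calls,
# longer and more patient for batch jobs.
PROFILES = {
    "interactive": RetryPolicy(max_attempts=2, base_delay_s=0.2, max_delay_s=1.0, timeout_s=10.0),
    "batch": RetryPolicy(max_attempts=4, base_delay_s=1.0, max_delay_s=30.0, timeout_s=120.0),
}


class TransientError(Exception):
    """Stand-in for a retryable failure (429, 5xx, timeout)."""


def backoff_delay(retry_index: int, policy: RetryPolicy, rng: random.Random) -> float:
    """Full jitter: uniform in [0, min(cap, base * 2**retry_index)]."""
    ceiling = min(policy.max_delay_s, policy.base_delay_s * (2 ** retry_index))
    return rng.uniform(0, ceiling)


def call_with_retries(
    send: Callable[[dict, str, float], dict],  # (payload, idempotency_key, timeout_s)
    payload: dict,
    policy: RetryPolicy,
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> dict:
    rng = rng or random.Random()
    # One key per logical request, reused on every attempt, so a downstream
    # system that honors it can de-duplicate side effects.
    idempotency_key = str(uuid.uuid4())
    attempt = 0
    while True:
        try:
            return send(payload, idempotency_key, policy.timeout_s)
        except TransientError:
            attempt += 1
            if attempt >= policy.max_attempts:
                raise  # bounded: give up after max_attempts
            sleep(backoff_delay(attempt - 1, policy, rng))


if __name__ == "__main__":
    state = {"calls": 0}

    def send(payload: dict, key: str, timeout_s: float) -> dict:
        state["calls"] += 1
        if state["calls"] == 1:
            raise TransientError("429")
        return {"ok": True, "idempotency_key": key}

    result = call_with_retries(send, {"prompt": "hi"}, PROFILES["interactive"])
    print(result["ok"], state["calls"])  # True 2
```

MultiRoute already retries and fails over on the platform side, so keeping `max_attempts` small on the client avoids multiplying the total number of upstream calls. Whether a downstream system actually honors the idempotency key depends on that system. The sketch only guarantees that the key stays constant across attempts.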